from IPython.display import clear_output

import pandas as pd

import numpy as np

import os

import warnings

#from urllib.parse import quote

import cartopy.crs as ccrs

import cartopy.feature as cfeature

from cartopy.mpl.ticker import LongitudeFormatter, LatitudeFormatter

import matplotlib.pyplot as plt

import xarray as xr

warnings.filterwarnings('ignore')Introduction to ERDDAP and Matchup satellite data to animal tracks

Overview

History | Updated Feb 2025

In this tutorial you will learn about ERDDAP data server, access satellite data from ERDDAP server, and match the data with an animal telemetry track.

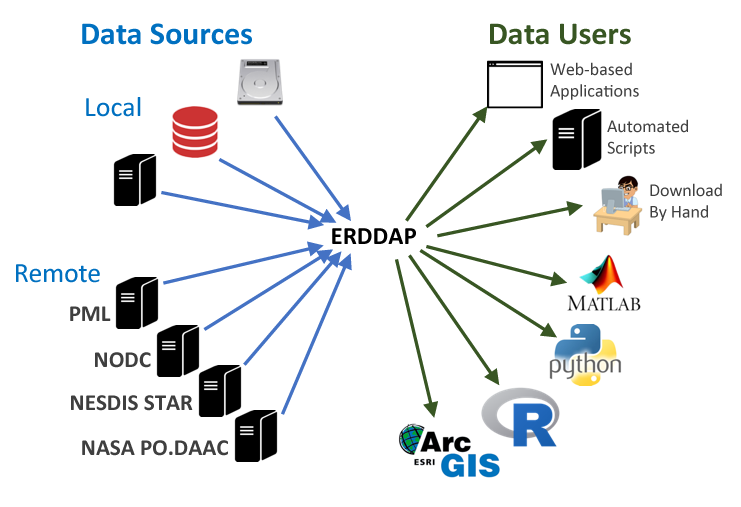

What is ERDDAP

For many users, obtaining the ocean satellite data they need requires downloading data from several data providers, each with its own file formats, download protocols, subset abilities, and preview abilities.

The goal behind ERDDAP is to make it easier for you to get scientific data. To accomplish that goal, ERDDAP acts as a middleman, selectively channeling datasets from remote and local data sources to a single data portal. With ERDDAP as the single-source portal, you have access to a simple, consistent way to download subsets of gridded and tabular scientific datasets in common file formats, with options and make graphs and maps.

Features of ERDDAP:

- Data in the common file format of your choice. ERDDAP offers all data as .html table, ESRI .asc and .csv, Google Earth .kml, OPeNDAP binary, .mat, .nc, ODV .txt, .csv, .tsv, .json, and .xhtml

- ERDDAP can also return a .png or .pdf image with a customized graph or map

- Standardized dates/times (“seconds since 1970-01-01T00:00:00Z” in UTC)

- A graphical interface for humans with browsers

- RESTful web services for machine-to-machine data exchange and downloading data directly into your software applications (e.g.Matlab, R, Python…) and even into web pages.

Understanding the ERDDAP URL

Download requests to ERDDAP are completely defined within a URL, allowing:

- machine-to-machine data exchange,

- bringing data directly into analysis tools,

- and the use ERDDAP as a back end to drive customized online interfaces.

For example, here is the ERDDAP URLs of the monthly chlorophyll-a from European Space Agency’s OC-CCI product: https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2023-03-01)][(89.9792):(-89.9792)][(0.02083):(359.9792)]

We can deconstruct the URL into its component parts:

| Name | Value | Description |

|---|---|---|

| ERDDAP base URL | https://oceanwatch.pifsc.noaa.gov/erddap/griddap/ | Web location of ERDDAP server |

| Dataset ID | esa-cci-chla-monthly-v6-0 | Unique dataset ID |

| Download file | .csv | Data file to download (CSV is this case) |

| Query indicator | ? | Mark start of data query |

| Variable | chlor_a | ERDDAP variable to download |

| Time range | [(2023-02-15):1:(2023-03-01)] | Temporal date range to download |

| Latitude range | [(89.9792):(-89.9792)] | Latitude range to download |

| Longitude range | [(0.02083):(359.9792)] | Longitude range to download |

By reading the URL, you get an idea of the data to be accessed, and you can modify the data request by simply modifying the URL.

List of ERDDAP servers

ERDDAP has been installed by over 100 organizations worldwide. A complete list is available here: https://coastwatch.pfeg.noaa.gov/erddap/download/setup.html#organizations

CoastWatch

* CoastWatch West Coast Node https://coastwatch.pfeg.noaa.gov/erddap/ * CoastWatch PolarWatch Node https://polarwatch.noaa.gov/erddap/index.html * CoastWatch Central Pacific Node https://oceanwatch.pifsc.noaa.gov/erddap/ * CoastWatch Gulf of Mexico Node https://cwcgom.aoml.noaa.gov/erddap/ * CoastWatch Great Lakes Node https://coastwatch.glerl.noaa.gov/erddap/ * CoastWatch Central https://coastwatch.noaa.gov/erddap/

More tutorials on how to use ERDDAP servers

CoastWatch Tutorials- ERDDAP basics: https://github.com/coastwatch-training/CoastWatch-Tutorials/tree/main/ERDDAP-basics

Matchup satellite data to animal tracks

In this exercise you will extract satellite data around a set of points defined by longitude, latitude, and time coordinates, like that produced by an animal telemetry tag, and ship track, or a glider tract.

Please note that there may be more efficient ways, more Pythonic ways, to accomplish the tasks in this tutorial. The tutorial was developed to be easier to follow for less experienced users of Python.

The exercise demonstrates the following techniques:

- Loading data from a tab- or comma-separated file

- Plotting the latitude/longitude points onto a map

- Extracting satellite data along a track

- Building an ERDDAP data-request URL

- Saving results as a CSV file

- Plotting the satellite data onto a map

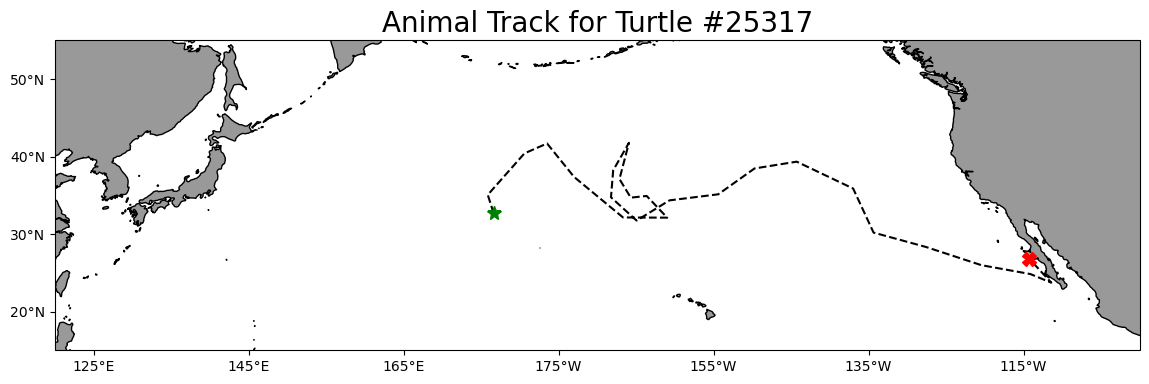

Track data used:

- A loggerhead turtle telemetry track that has been subsample to reduce the data requests needed for this tutorial from over 1200 to 25. The turtle was raised in captivity in Japan, then tagged and released on 05/04/2005 in the Central Pacific. Its tag transmitted for over 3 years and went all the way to the Southern tip of Baja California. The track data can be downloaded from

datafolder in this project folder.

Python packages used:

- pandas (reading and analyzing data)

- numpy (data analysis, manipulation)

- xarray (multi-dimensional data analysis, manipulation)

- matplotlib (mapping)

- cartopy (mapping)

- datetime (date manipulation)

Import the required Python modules

Load the track data into a Pandas data frame

Below, the track data will load using the Pandas “read_csv” method. * Then use the “.head()” method to view the column names and the first few rows of data.

track_path = os.path.join('.',

'data',

'25317_05_subsampled.dat')

df = pd.read_csv(track_path)

print(df.head(2))

print(' ')

print('Data types for each column')

print(df.dtypes)

print(' ')

print('Spatial corrdinate ranges')

print('latitude range', round(df.mean_lat.min(), 2), round(df.mean_lat.max(), 2))

print('longitude range', round(df.mean_lon.min(), 2), round(df.mean_lon.max(), 2)) mean_lon mean_lat year month day

0 176.619433 32.678728 2005 5 4

1 175.860895 35.057734 2005 6 23

Data types for each column

mean_lon float64

mean_lat float64

year int64

month int64

day int64

dtype: object

Spatial corrdinate ranges

latitude range 23.72 41.77

longitude range 175.86 248.57Plot the track on a map

plt.figure(figsize=(14, 10))

# Label axes of a Plate Carree projection with a central longitude of 180:

ax1 = plt.subplot(211, projection=ccrs.PlateCarree(central_longitude=180))

# Use the lon and lat ranges to set the extent of the map

# the 120, 260 lon range will show the whole Pacific

# the 15, 55 lat range with capture the range of the data

ax1.set_extent([120, 260, 15, 55], ccrs.PlateCarree())

# Set the tick marks to be slightly inside the map extents

ax1.set_xticks(range(125, 255, 20), crs=ccrs.PlateCarree())

ax1.set_yticks(range(20, 60, 10), crs=ccrs.PlateCarree())

# Add feature to the map

ax1.add_feature(cfeature.LAND, facecolor='0.6')

ax1.coastlines()

# Format the lat and lon axis labels

lon_formatter = LongitudeFormatter(zero_direction_label=True)

lat_formatter = LatitudeFormatter()

ax1.xaxis.set_major_formatter(lon_formatter)

ax1.yaxis.set_major_formatter(lat_formatter)

# Bring the lon and lat data into a numpy array

x, y = df.mean_lon.to_numpy(), df.mean_lat.to_numpy()

ax1 = plt.plot(x, y, transform=ccrs.PlateCarree(), color='k', linestyle='dashed')

# start point in green star

ax1 = plt.plot(x[0], y[0],

marker='*',

color='g',

transform=ccrs.PlateCarree(),

markersize=10)

# end point in red X

ax1 = plt.plot(x[-1], y[-1],

marker='X',

color='r',

transform=ccrs.PlateCarree(),

markersize=10)

plt.title('Animal Track for Turtle #25317', fontsize=20)

plt.show()

Option 1: Using ERDDAP data-request URL to extract data and mactchup to track

Prepare track data for use in the ERDDAP data-request URL

To build the ERDDAP data-request URLs, we will need: * Dates as strings in a format that ERDDAP can understand, i.e. YYYY-mm-dd.

* The latitude and longitude values need to be converted to strings (characters) not the numerical values found in the mean_lon and mean_lat columns.

Create a formatted date column and change the columns data type

Let’s do that in two steps:

- Reload the “25317_05_subsampled.dat”. This time we will use the “parse_dates” option to create a Pandas date object column (year_month_day) from the ‘year’, ‘month’, and ‘day’ columns.

df = pd.read_csv('./data/25317_05_subsampled.dat',

parse_dates=[['year', 'month', 'day']]

)

print('The new year_month_day column contains the Pandas date objects')

df.head(2)The new year_month_day column contains the Pandas date objects| year_month_day | mean_lon | mean_lat | |

|---|---|---|---|

| 0 | 2005-05-04 | 176.619433 | 32.678728 |

| 1 | 2005-06-23 | 175.860895 | 35.057734 |

- Use the year_month_day column to create a column called “date_str” containing string versions of the date with the format “YYYY-mm-dd”.

- Delete the year_month_day column to keep the data frame smaller

df['date_str'] = df['year_month_day'].dt.strftime('%Y-%m-%d')

# Clean up the data frame a little by deleting the year_month_day column

del df['year_month_day']

print(df.head(2))

print(' ')

print('The time range is:', df.date_str.min(), df.date_str.max()) mean_lon mean_lat date_str

0 176.619433 32.678728 2005-05-04

1 175.860895 35.057734 2005-06-23

The time range is: 2005-05-04 2008-08-16Create string versions of the latitude and longitude data

Create two new columns (mean_lon_str and mean_lat_str) the have the latitude and longitude coordinates as string data types rather than numerical (float) data types found in columns mean_lon and mean_lat.

* The two new columns are mean_lon_str and mean_lat_str

df[['mean_lon_str', 'mean_lat_str']] = df[['mean_lon',

'mean_lat'

]].to_numpy(dtype=str)

print(df.head(2))

print(' ')

print('Data types for each column')

print(df.dtypes) mean_lon mean_lat date_str mean_lon_str mean_lat_str

0 176.619433 32.678728 2005-05-04 176.619432886108 32.6787283689241

1 175.860895 35.057734 2005-06-23 175.860895212552 35.057734124614

Data types for each column

mean_lon float64

mean_lat float64

date_str object

mean_lon_str object

mean_lat_str object

dtype: objectExtract data from a satellite dataset corresponding to points on the track

We are going to download data from an ERDDAP server using the following steps: * Select a dataset on an ERDDAP server * Loop though the track data using the string versions of data, latitude and longitude to build an ERDDAP data-request URL for each row of the track data frame. * Use the ERDDAP data-request URL to download satellite data into Pandas * Add the downloaded data to your track data frame.

Select a dataset

We’ll use the European Space Agency’s Ocean Colour Climate Change Initiative (OC-CCI) product (https://climate.esa.int/en/projects/ocean-colour/) to obtain chlorophyll data. This is a merged product combining data from many ocean color sensors to create a long time series (1997-present).

Ideally we would use a daily dataset, selecting the day correspond the the track data date. However, chlorophyll measurements can have lots of missing data, primarily due to cloud cover. To reduce data gaps and improve the likelihood of data for our matchups, we can use a dataset that combines data monthly averages.

Let’s use data from the monthly version of the OC-CCI datasets.

The ERDDAP URLs to the monthly version is below:

https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0

A note on dataset selection

We have preselected the dataset because we know it will work with this exercise. If you were selecting datasets on your own, you would want to check out the dataset to determine if its spatial and temporal coverages are suitable for your application. Following the link above you will find:

The latitude range is -89.97916 to 89.97916 and the longitude range is 0.020833 to 359.97916, which covers the track latitude range of 23.72 to 41.77 and longitude range of 175.86 to 248.57.

The time range is 1997-09-04 to 2023-03-01 (at the day of this writing), which covers the track time range of 2005-05-04 to 2008-08-16.

You should also note the name of the variable you will be downloading. For this dataset it is “chlor_a” ### Refresher on building the ERDDAP data-request URL To refresh your memory from the ERDDAP Tutorial, a full ERDDAP data-request URL looks like the following: https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2023-03-01)][(89.9792):(-89.9792)][(0.02083):(359.9792)]

We can deconstruct the URL into its component parts:

| Name | Value | Description |

|---|---|---|

| ERDDAP base URL | https://oceanwatch.pifsc.noaa.gov/erddap/griddap/ | Web location of ERDDAP server |

| Dataset ID | aesa-cci-chla-monthly-v6-0 | Unique dataset ID |

| Download file | .csv | Data file to download (CSV is this case) |

| Query indicator | ? | Mark start of data query |

| Variable | chlor_a | ERDDAP variable to download |

| Time range | [(2023-02-15):1:(2023-03-01)] | Temporal date range to download |

| Latitude range | [(89.9792):(-89.9792)] | Latitude range to download |

| Longitude range | [(0.02083):(359.9792)] | Longitude range to download |

We need to construct these components parts for each row of the track data frame and join them together to form the ERDDAP data-request URL.

Building the ERDDAP data-request URL and downloading satellite data

# create a data frame to hold the downloaded satellite data

col_names = ["iso_date", "matched_lat", "matched_lon", "matched_chla"]

# create tot dataframe with the column names

tot = pd.DataFrame(columns=col_names)

# create variables for the unchanging parts of the ERDDAP data-request URL.

base_url = 'https://oceanwatch.pifsc.noaa.gov/erddap/griddap/'

dataset_id = "esa-cci-chla-monthly-v6-0"

file_type = '.csv'

query_start = '?'

erddap_variable = 'chlor_a'

# create the start of the ERDDAP data-request URL by joining URL components

start_url = ''.join([base_url,

dataset_id,

file_type,

query_start,

erddap_variable

])

# Finish each URL and download

for i in range(0, len(df)):

# for each row in the track data frame, create the query part of the ERDDAP data-request URL.

query_url = ''.join([

'[(' + df['date_str'][i] + '):1:(' + df['date_str'][i] + ')]',

'[(' + df['mean_lat_str'][i] + '):1:(' + df['mean_lat_str'][i] + ')]',

'[(' + df['mean_lon_str'][i] + '):1:(' + df['mean_lon_str'][i] + ')]'

])

#encoded_query = quote(query_url, safe='')

# join the start and query parts of the url

url = start_url + query_url

print(i+1, 'of', len(df), url)

# download the data as a CSV file directly into Pandas

new = pd.read_csv(url, skiprows=1)

new.columns = col_names

# load into the holding data frame

tot = pd.concat([tot, new], ignore_index=True)

1 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2005-05-04):1:(2005-05-04)][(32.6787283689241):1:(32.6787283689241)][(176.619432886108):1:(176.619432886108)]

2 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2005-06-23):1:(2005-06-23)][(35.057734124614):1:(35.057734124614)][(175.860895212552):1:(175.860895212552)]

3 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2005-08-12):1:(2005-08-12)][(40.4057593651645):1:(40.4057593651645)][(180.592617770427):1:(180.592617770427)]

4 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2005-10-01):1:(2005-10-01)][(41.6848032419466):1:(41.6848032419466)][(183.510212411605):1:(183.510212411605)]

5 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2005-11-20):1:(2005-11-20)][(37.3662285175569):1:(37.3662285175569)][(186.999746586372):1:(186.999746586372)]

6 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2006-01-09):1:(2006-01-09)][(32.1379277609952):1:(32.1379277609952)][(193.315150592714):1:(193.315150592714)]

7 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2006-02-28):1:(2006-02-28)][(32.1112577913518):1:(32.1112577913518)][(199.01578005525):1:(199.01578005525)]

8 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2006-04-19):1:(2006-04-19)][(34.9122377945573):1:(34.9122377945573)][(196.367856222399):1:(196.367856222399)]

9 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2006-06-08):1:(2006-06-08)][(34.6966077152962):1:(34.6966077152962)][(194.311561551928):1:(194.311561551928)]

10 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2006-07-28):1:(2006-07-28)][(37.0917501942058):1:(37.0917501942058)][(192.85445935735):1:(192.85445935735)]

11 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2006-09-16):1:(2006-09-16)][(41.7693290372383):1:(41.7693290372383)][(194.078757811627):1:(194.078757811627)]

12 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2006-11-05):1:(2006-11-05)][(38.2279593047231):1:(38.2279593047231)][(192.044436165313):1:(192.044436165313)]

13 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2006-12-25):1:(2006-12-25)][(34.7385780752043):1:(34.7385780752043)][(191.760026290605):1:(191.760026290605)]

14 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2007-02-13):1:(2007-02-13)][(31.7396435280445):1:(31.7396435280445)][(195.063134981283):1:(195.063134981283)]

15 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2007-04-04):1:(2007-04-04)][(34.3432418597437):1:(34.3432418597437)][(199.306553405532):1:(199.306553405532)]

16 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2007-05-24):1:(2007-05-24)][(35.1277116865649):1:(35.1277116865649)][(205.605040455239):1:(205.605040455239)]

17 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2007-07-13):1:(2007-07-13)][(38.460826483342):1:(38.460826483342)][(210.280467921659):1:(210.280467921659)]

18 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2007-09-01):1:(2007-09-01)][(39.3374947731784):1:(39.3374947731784)][(215.722452964025):1:(215.722452964025)]

19 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2007-10-21):1:(2007-10-21)][(35.8679284198372):1:(35.8679284198372)][(223.007301112593):1:(223.007301112593)]

20 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2007-12-10):1:(2007-12-10)][(30.1854023141461):1:(30.1854023141461)][(225.638647308816):1:(225.638647308816)]

21 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2008-01-29):1:(2008-01-29)][(28.328588352337):1:(28.328588352337)][(232.406424355302):1:(232.406424355302)]

22 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2008-03-19):1:(2008-03-19)][(25.9810780813157):1:(25.9810780813157)][(239.552975360004):1:(239.552975360004)]

23 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2008-05-08):1:(2008-05-08)][(24.8366188324699):1:(24.8366188324699)][(245.871574770029):1:(245.871574770029)]

24 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2008-06-27):1:(2008-06-27)][(23.7241735345355):1:(23.7241735345355)][(248.571044756):1:(248.571044756)]

25 of 25 https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-monthly-v6-0.csv?chlor_a[(2008-08-16):1:(2008-08-16)][(26.7817714626367):1:(26.7817714626367)][(245.7579071789):1:(245.7579071789)]tot.head(2)| iso_date | matched_lat | matched_lon | matched_chla | |

|---|---|---|---|---|

| 0 | 2005-05-01T00:00:00Z | 32.6875 | 176.604167 | 0.293616 |

| 1 | 2005-07-01T00:00:00Z | 35.0625 | 175.854167 | 0.114716 |

Consolidate the downloaded satellite data into the track data frame

df_chla = df

df_chla[['matched_lat', 'matched_lon', 'matched_chla']] = tot[['matched_lat',

'matched_lon',

'matched_chla'

]]

df_chla.head(2)| mean_lon | mean_lat | date_str | mean_lon_str | mean_lat_str | matched_lat | matched_lon | matched_chla | |

|---|---|---|---|---|---|---|---|---|

| 0 | 176.619433 | 32.678728 | 2005-05-04 | 176.619432886108 | 32.6787283689241 | 32.6875 | 176.604167 | 0.293616 |

| 1 | 175.860895 | 35.057734 | 2005-06-23 | 175.860895212552 | 35.057734124614 | 35.0625 | 175.854167 | 0.114716 |

Save your work

df_chla.to_csv('chl_matchup_turtle25327.csv', index=False, encoding='utf-8')Option 2: Using xarray select function to extract data and mactchup to track

Prepare track data for use to extract satellite data

Create a column with Pandas date objects

Reload the “25317_05_subsampled.dat”. Again we will use the “parse_dates” option to create a Pandas date object column (year_month_day) from the ‘year’, ‘month’, and ‘day’ columns.

df_1 = pd.read_csv(track_path,

parse_dates=[['year', 'month', 'day']]

)

print('The new year_month_day column contains the Pandas date objects')

print(df_1.head(2))

print(df_1.dtypes)The new year_month_day column contains the Pandas date objects

year_month_day mean_lon mean_lat

0 2005-05-04 176.619433 32.678728

1 2005-06-23 175.860895 35.057734

year_month_day datetime64[ns]

mean_lon float64

mean_lat float64

dtype: objectExtract data from a satellite dataset corresponding to points on the track

We are going to download data from an ERDDAP server using the following steps: * Select a dataset on an ERDDAP server * Open the dataset using the Xarray module * Loop though the track data and pull out the date, latitude and longitude coordinates from each row * Insert these coordinates into the Xarray open-dataset object to select and download the satellite data that corresponds to the coordinates. * Store the satellite data in a temporary Pandas data frame * Once all the satellite data has been added to the temporary data frame, merge it with the track data frame.

Select a dataset

This time let’s look at the sea surface temperature data using the NOAA Geo-polar Blended Analysis SST, GHRSST dataset. The ERDDAP URL for the near real time daily GHRSST dataset is:

https://oceanwatch.pifsc.noaa.gov/erddap/griddap/goes-poes-1d-ghrsst-RAN

Open the satellite data in Xarray

- Use the ERDDAP URL with no extension (e.g. without .html or .graph…). This is the OPeNDAP URL, which allows viewing the dataset metadata and, when you select the data you want, downloading the data.

- Use the Xarray “open_dataset” function then view the metadata

erddap_url = '/'.join(['https://oceanwatch.pifsc.noaa.gov',

'erddap',

'griddap',

'goes-poes-1d-ghrsst-RAN'])

ds = xr.open_dataset(erddap_url)

ds<xarray.Dataset>

Dimensions: (time: 8180, latitude: 3600, longitude: 7200)

Coordinates:

* time (time) datetime64[ns] 2002-09-01T12:00:00 ... 2025-02-2...

* latitude (latitude) float32 -89.97 -89.93 -89.88 ... 89.93 89.97

* longitude (longitude) float32 0.025 0.075 0.125 ... 359.9 360.0

Data variables:

analysed_sst (time, latitude, longitude) float32 ...

analysis_error (time, latitude, longitude) float32 ...

mask (time, latitude, longitude) float32 ...

sea_ice_fraction (time, latitude, longitude) float32 ...

Attributes: (12/51)

acknowledgement: NOAA/NESDIS

cdm_data_type: Grid

comment: The Geo-Polar Blended Sea Surface Temperature...

Conventions: CF-1.6, Unidata Observation Dataset v1.0, COA...

creator_email: john.sapper@noaa.gov

creator_name: Office of Satellite Products and Operations

... ...

standard_name_vocabulary: CF Standard Name Table v29

summary: Analyzed blended sea surface temperature over...

time_coverage_end: 2025-02-24T12:00:00Z

time_coverage_start: 2002-09-01T12:00:00Z

title: Sea Surface Temperature, NOAA geopolar blende...

Westernmost_Easting: 0.025Opening the dataset in Xarray lets you look at the dataset metadata.

* The metadata are listed above. * No data is downloaded until you request it.

From the metadata you can view: * The coordinates (time, latitude and longitude) that you will use to select the data to download. * A list of four data variables. For this exercise, we want the “analysed_sst” variable. If you want, you can find out about each variable with clicking the page icon to the right of each variable name.

A note on dataset selection

We have preselected this dataset because we know it will work with this exercise. If you were selecting datasets on your own, you would want to check out the dataset to determine if its spatial and temporal coverages are suitable for your application.

You can find that information above by clicking the right arrow next to “Attribute”. Then look through the list to find: * ‘time_coverage_start’ and ‘time_coverage_end’: the time range * ‘geospatial_lat_min’ and ‘geospatial_lat_max’: the latitude range * ‘geospatial_lon_min’ and ‘geospatial_lon_max’: the longitude range

There are a lot of metadata attributes to look through. We can make it easier with a little code to print out the metadata of interest. Then compare these ranges to those found in your track data.

print('Temporal and spatial ranges of the satellite dataset')

print('time range', ds.attrs['time_coverage_start'],

ds.attrs['time_coverage_end'])

print('latitude range', ds.attrs['geospatial_lat_min'],

ds.attrs['geospatial_lat_max'])

print('longitude range', ds.attrs['geospatial_lon_min'],

ds.attrs['geospatial_lon_max'])

print(' ')

print('Temporal and spatial ranges of the track data')

print('time range', df_1.year_month_day.min(), df_1.year_month_day.max())

print('latitude range',

round(df_1.mean_lat.min(), 2), round(df_1.mean_lat.max(), 2))

print('longitude range',

round(df_1.mean_lon.min(), 2), round(df_1.mean_lon.max(), 2))Temporal and spatial ranges of the satellite dataset

time range 2002-09-01T12:00:00Z 2025-02-24T12:00:00Z

latitude range -89.975 89.975

longitude range 0.025 359.975

Temporal and spatial ranges of the track data

time range 2005-05-04 00:00:00 2008-08-16 00:00:00

latitude range 23.72 41.77

longitude range 175.86 248.57Download the satellite data that corresponds to each track location

# Create a temporary Pandas data frame to hold the downloaded satellite data

col_names = ["erddap_date", "matched_lat", "matched_lon", "matched_sst"]

tot = pd.DataFrame(columns=col_names)

# Finish each URL and download

for i in range(0, len(df)):

clear_output(wait=True)

print(i+1, 'of', len(df))

# Crop the dataset to include data that corresponds to track locations

cropped_ds = ds['analysed_sst'].sel(time=df_1.year_month_day[i],

latitude=df_1.mean_lat[i],

longitude=df_1.mean_lon[i],

method='nearest'

) -273.1 #Kelvin to Degree Celsius

# Downloaded the data and add it to a new line in the tot data frame

tot.loc[len(tot.index)] = [cropped_ds.time.values,

np.round(cropped_ds.latitude.values, 5), # round 5 dec

np.round(cropped_ds.longitude.values, 5), # round 5 dec

np.round(cropped_ds.values, 2) # round 2 decimals

]

print(tot.loc[[len(tot)-1]])

tot.head(2)25 of 25

erddap_date matched_lat matched_lon matched_sst

24 2008-08-16 12:00:00 26.775 245.774994 25.6| erddap_date | matched_lat | matched_lon | matched_sst | |

|---|---|---|---|---|

| 0 | 2005-05-04 12:00:00 | 32.674999 | 176.625 | 16.97 |

| 1 | 2005-06-23 12:00:00 | 35.075001 | 175.875 | 17.65 |

Consolidate the downloaded satellite data into the track data frame

df_sst = df_1

df_sst[['matched_lat',

'matched_lon',

'matched_sst',

'erddap_date']] = tot[['matched_lat',

'matched_lon',

'matched_sst',

'erddap_date']]

df_sst.head(2)| year_month_day | mean_lon | mean_lat | matched_lat | matched_lon | matched_sst | erddap_date | |

|---|---|---|---|---|---|---|---|

| 0 | 2005-05-04 | 176.619433 | 32.678728 | 32.674999 | 176.625 | 16.97 | 2005-05-04 12:00:00 |

| 1 | 2005-06-23 | 175.860895 | 35.057734 | 35.075001 | 175.875 | 17.65 | 2005-06-23 12:00:00 |

Save your work

df_sst.to_csv('sst_matchup_turtle25327.csv', index=False, encoding='utf-8')Plot chlorophyll and SST matchup data onto a map

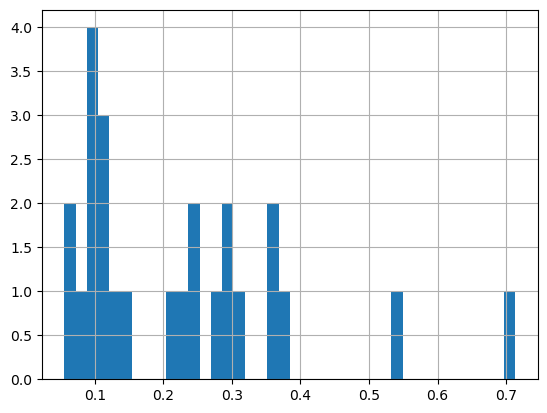

First plot a histogram of the chlorophyll data

print('Range:', df_chla.matched_chla.min(), df_chla.matched_chla.max())

_ = df_chla.matched_chla.hist(bins=40) Range: 0.05568646 0.7134876

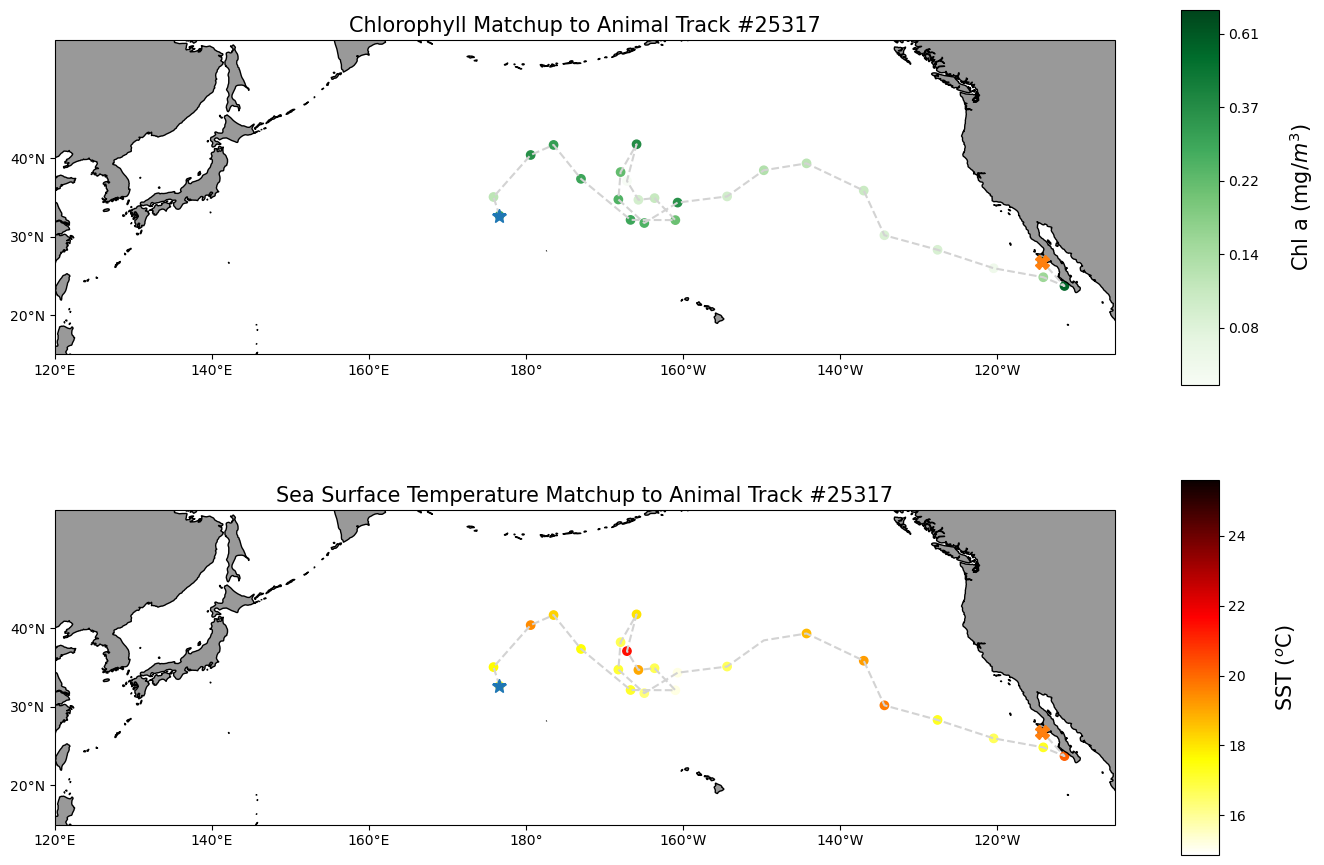

Near the Transition Zone Chlorophyll Front (0.2mg/m\(^3\)), a foraging ground for many preditor species in the North Pacific.

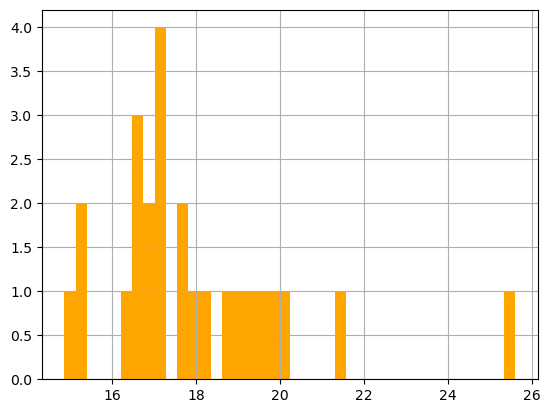

Plot a histogram of the SST data

print('Range:', df_sst.matched_sst.min(), df_sst.matched_sst.max())

_ = df_sst.matched_sst.hist(bins=40,color='orange') Range: 14.86 25.6

Largely within the thermal habitat for Loggerhead sea turtle, i.e., 16.5-18.5 \(^o\)C (63.5-65.5F) in the North Pacific.

Map the chlorophyll and SST data

plt.figure(figsize=(14, 10))

#plot chl-a matchup

# Label axes of a Plate Carree projection with a central longitude of 180:

# set the projection

ax1 = plt.subplot(211, projection=ccrs.PlateCarree(central_longitude=180))

# Use the lon and lat ranges to set the extent of the map

# the 120, 260 lon range will show the whole Pacific

# the 15, 55 lat range with capture the range of the data

ax1.set_extent([120,255, 15, 55], ccrs.PlateCarree())

# set the tick marks to be slightly inside the map extents

ax1.set_xticks(range(120,255,20), crs=ccrs.PlateCarree())

ax1.set_yticks(range(20,50,10), crs=ccrs.PlateCarree())

# Add geographical features

ax1.add_feature(cfeature.LAND, facecolor='0.6')

ax1.coastlines()

# format the lat and lon axis labels

lon_formatter = LongitudeFormatter(zero_direction_label=True)

lat_formatter = LatitudeFormatter()

ax1.xaxis.set_major_formatter(lon_formatter)

ax1.yaxis.set_major_formatter(lat_formatter)

# build and plot coordinates onto map

x,y = list(df_chla.mean_lon),list(df_chla.mean_lat)

ax1 = plt.plot(x, y, transform=ccrs.PlateCarree(), color='lightgray',linestyle='dashed')

ax1 = plt.scatter(x, y, transform=ccrs.PlateCarree(),

marker='o',

c=np.log(df_chla.matched_chla),

cmap=plt.get_cmap('Greens')#BuGn')

)

ax1=plt.plot(x[0],y[0],marker='*', transform=ccrs.PlateCarree(), markersize=10)

ax1=plt.plot(x[-1],y[-1],marker='X', transform=ccrs.PlateCarree(), markersize=10)

# control color bar values spacing

levs2 = np.arange(-2.5, 0, 0.5)

cbar=plt.colorbar(ticks=levs2, shrink=0.75, aspect=10)

cbar.set_label("Chl a (mg/$m^3$)", size=15, labelpad=20)

# set the labels to be exp(levs2) so the label reflect values of chl-a, not log(chl-a)

cbar.ax.set_yticklabels(np.around(np.exp(levs2), 2), size=10)

plt.title("Chlorophyll Matchup to Animal Track #25317", size=15)

#plot sst matchup

# set the projection

ax2 = plt.subplot(212, projection=ccrs.PlateCarree(central_longitude=180))

# Use the lon and lat ranges to set the extent of the map

# the 120, 260 lon range will show the whole Pacific

# the 15, 55 lat range with capture the range of the data

ax2.set_extent([120,255, 15, 55], ccrs.PlateCarree())

# set the tick marks to be slightly inside the map extents

ax2.set_xticks(range(120,255,20), crs=ccrs.PlateCarree())

ax2.set_yticks(range(20,50,10), crs=ccrs.PlateCarree())

# Add geographical features

ax2.add_feature(cfeature.LAND, facecolor='0.6')

ax2.coastlines()

# format the lat and lon axis labels

lon_formatter = LongitudeFormatter(zero_direction_label=True)

lat_formatter = LatitudeFormatter()

ax2.xaxis.set_major_formatter(lon_formatter)

ax2.yaxis.set_major_formatter(lat_formatter)

# build and plot coordinates onto map

x,y = list(df_sst.mean_lon),list(df_sst.mean_lat)

ax2 = plt.plot(x, y, transform=ccrs.PlateCarree(), color='lightgray',linestyle='dashed')

ax2 = plt.scatter(x, y, transform=ccrs.PlateCarree(),

marker='o',

c=df_sst.matched_sst,

cmap=plt.get_cmap('hot_r')

)

ax2=plt.plot(x[0],y[0],marker='*', transform=ccrs.PlateCarree(), markersize=10)

ax2=plt.plot(x[-1],y[-1],marker='X', transform=ccrs.PlateCarree(), markersize=10)

# plot colorbar

cbar=plt.colorbar(shrink=0.75, aspect=10)

cbar.set_label("SST ($^o$C)", size=15, labelpad=20)

plt.title("Sea Surface Temperature Matchup to Animal Track #25317", size=15)

plt.tight_layout()

plt.show()

On your own!

Exercise 1:

Repeat the steps above with a different dataset. For example, use the weekly version of the OC-CCI dataset to see how cloud cover can reduce the data you retrieve. https://oceanwatch.pifsc.noaa.gov/erddap/griddap/esa-cci-chla-8d-v6-0.html * This dataset is a different ERDDAP; It has the same base URL and variable name, but a different dataset ID.

Exercise 2:

Go to an ERDDAP of your choice, find a dataset of interest, generate the URL, copy it and edit the script above to run a match up on that dataset. To find other ERDDAP servers, you can use this search engine: http://erddap.com/

* This dataset will likely be on a different base URL and dataset ID and variable name. * Check the metadata to make sure the dataset covers the spatial and temporal range of the track dataset.